AI site builders are having a moment.

Scroll through any marketing Facebook group, LinkedIn feed, X timeline, or YouTube channel right now, and you’ll find someone showing off a stunning website they built in under an hour. No code, no developer, no page builder plugins.

Just a prompt, an AI, and a finished website that looks like it cost thousands of dollars to design.

It’s genuinely impressive.

But here’s the question most people aren’t asking: what happens when Google tries to crawl the site?

Looking good to human visitors and being visible to search engines are two very different things.

And after watching the vibe-coding wave grow louder in the communities I’m part of, I decided to stop speculating and start testing vibe-coded websites and their SEO implementations.

I ran an SEO audit on 20 real vibe-coded websites — sites owned by real marketers, creators, and builders who are actively using and, in some cases, publicly promoting their AI-built sites.

I tested using the Schema.org validator, Semrush, and direct sitemap checks.

To protect the privacy of site owners, I’m not naming anyone or revealing their URLs. But the data doesn’t need names to tell its story.

What I found was eye-opening, not because vibe-coded sites are hopeless, but because of why they’re struggling.

And if you’re a blogger, affiliate marketer, or content publisher thinking about moving your site to an AI website builder, this is exactly what you need to read first.

What Is Vibe-Coding?

Vibe-coding is the practice of building websites and software using AI tools as the primary builder.

Instead of writing code manually or dragging and dropping elements in a page builder, you describe what you want in plain language, and the AI generates the site for you.

The AI handles the HTML, CSS, JavaScript, and in some cases, the hosting setup, all without you touching a single line of code.

Several AI-powered site-building platforms have emerged to make this possible, and adoption has exploded among marketers, solopreneurs, and creators who want to move fast without hiring a developer or learning a Content Management System like WordPress.

And to be fair, the results are often stunning. Vibe-coded sites tend to be fast-loading, visually polished, and deployable in a fraction of the time it takes to build a traditional site.

For certain use cases, speed and aesthetic quality are a genuine advantage.

Those use cases include portfolio sites, personal branding pages, product landing pages, and minimum viable product (MVP) builds, where you’re testing an idea before committing to a full infrastructure build.

If SEO-driven organic traffic is not your primary growth channel, a vibe-coded site can absolutely serve you well.

The problem starts when business owners and content publishers, people who depend on Google to send them traffic every single day, treat vibe-coded platforms as a direct replacement for a mature CMS like WordPress.

That’s where the gap between looking good and ranking well becomes impossible to ignore.

How I Ran the Audit

The audit was straightforward, and the tools I used are the same ones SEO professionals rely on daily.

Tool 1: Schema.org Validator (validator.schema.org)

Schema markup is structured data embedded in a website’s code that helps search engines understand what a page is about.

The Schema.org validator crawls a URL, reports the structured data it finds, and flags any errors or warnings in the implementation.

This is different from Google’s Rich Results Test, and that distinction will matter later in this article.

For now, what’s important is that the Schema.org validator reads the raw HTML of a page, exactly the way a search engine crawler sees it on first contact.

Tool 2: Semrush Domain Overview

Semrush is one of the most widely used SEO platforms in the industry.

The Domain Overview report gives a snapshot of a site’s organic search performance, including estimated monthly traffic, total ranking keywords, backlink profile, and traffic trend over time.

Tool 3: Direct Sitemap Checks

For each site, I also checked the sitemap directly by navigating to the standard sitemap URL (yourdomain.com/sitemap.xml) and, where access was possible, cross-referencing it with Google Search Console data.

This revealed a third category of SEO failure that I hadn’t originally set out to test.

What I Was Looking For

These three tools together answer a fundamental question: Does Google know what this site is about, can it find the content, and is it rewarding the site with organic traffic?

All sites audited are owned by real people. No names, no URLs, just the data and what it reveals.

The Schema Markup Findings: It’s Not the Tool, It’s the Knowledge

PRO TIP!

Let’s start with something important: vibe-coded platforms are not inherently incapable of supporting schema markup.

Some of the sites I tested had schema present.

That finding changed how I framed this entire section, because the real problem isn’t the tool. It’s the knowledge gap between what the platform can do and what the person building the site actually knows to configure.

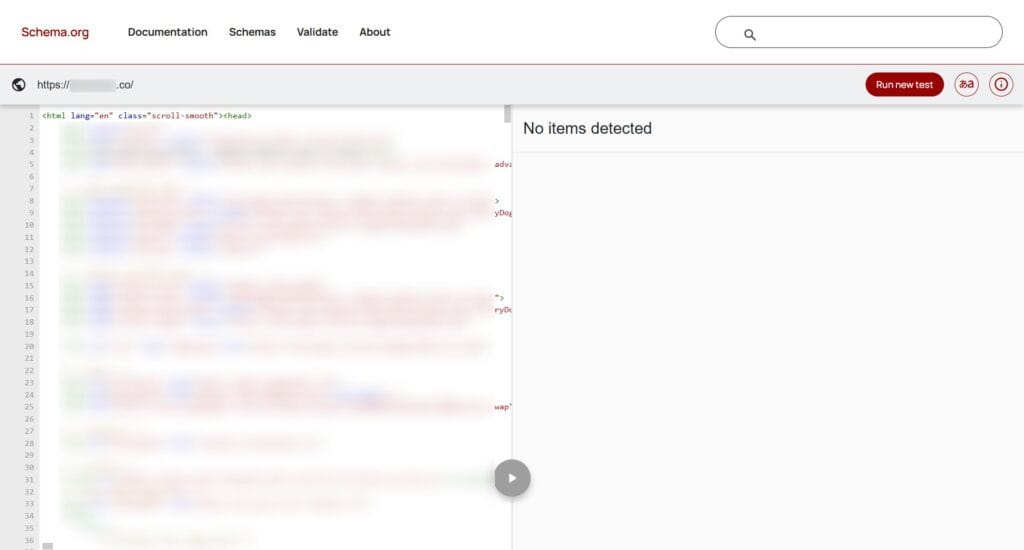

Finding 1: Sixteen out of 20 original sites had zero schema markup

Sixteen of the twenty sites I initially tested returned no structured data whatsoever on the Schema.org validator.

No Website schema. No Organization schema. No Article, Author, or any other markup. Nothing.

For Google, this means every one of those pages is a blank slate from a structured data perspective.

The crawler can read the text, but it has no machine-readable signals telling it what type of content the page contains, who wrote it, what the site is about, or whether it should be eligible for rich results in search.

Finding 2: Schema is possible, but only if you know what you’re doing

When I tested additional vibe-coded sites outside the original twenty, a different picture emerged.

Some of those sites had schema markup present. But none of them were clean. Every one returned warnings or errors on the validator.

This is the critical nuance.

The AI site-building platform didn’t automatically produce correct, complete schema. The user had to know enough to implement it, and in every case, the implementation had gaps.

PRO TIP!

In other words: having a schema is better than having none, but a broken schema is its own problem.

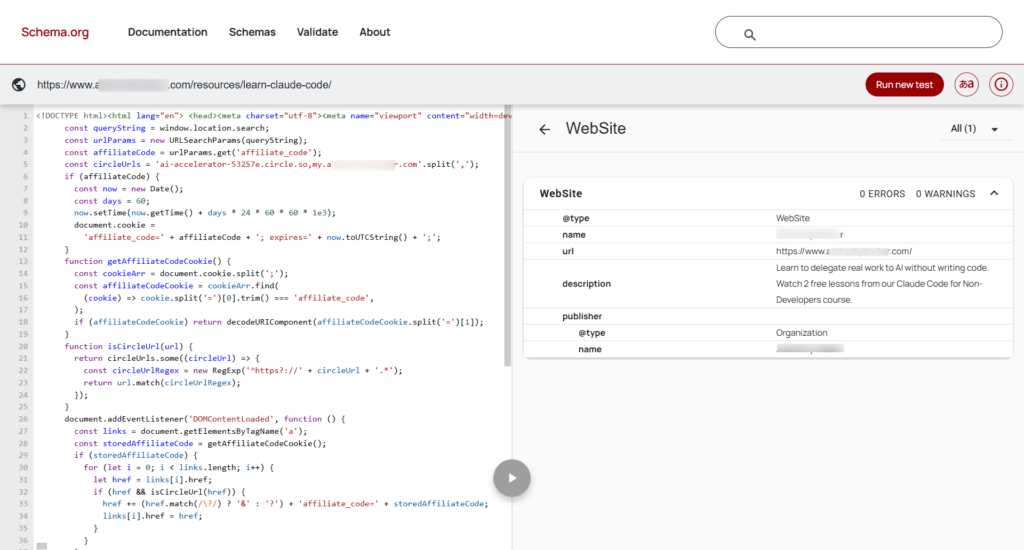

Finding 3: The authority site had schema, but it was barely there

One of the sites I audited belongs to a well-known authority blogger, podcaster, and YouTuber with a significant following (80K audience) and 15 years of marketing experience.

This site had Website and Organization schema markup, which put it ahead of the majority. But what it had was the bare minimum: a generic Website schema type with a name, homepage URL, description, and a publisher block.

NOTE:

Regardless of which page I tested, the schema markup was identical across the entire site.

For a content-heavy site publishing articles, reviews, courses, and guides, that’s the equivalent of handing Google a business card when it asks for a full portfolio.

The pages were missing Article schema, Author schema, BreadcrumbList, and any page-level structured data that would tell Google what each individual piece of content actually is.
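To make the missing page-level markup concrete, here is a minimal sketch of what Article and BreadcrumbList data for a single post could look like. The property names follow the Schema.org vocabulary, but the helper names, the example URLs, and the author name are placeholders I made up for illustration. None of them come from an audited site.

```python
import json


def article_schema(headline, url, author_name, date_published):
    """Return a minimal Schema.org Article object for one post."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name},
    }


def breadcrumb_schema(trail):
    """Return a BreadcrumbList from an ordered list of (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }


def to_script_tag(schema):
    """Serialize a schema dict into a JSON-LD script tag for the page HTML."""
    return '<script type="application/ld+json">' + json.dumps(schema) + "</script>"


if __name__ == "__main__":
    # Placeholder values, for illustration only.
    post = article_schema(
        "Example post title",
        "https://example.com/blog/example-post",
        "Jane Doe",
        "2025-01-15",
    )
    print(to_script_tag(post))
```

The point of the sketch is that each page gets its own data. A single site-wide Website block cannot tell Google what an individual article is or who wrote it.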

Finding 4: Six sites returned “URL not found”, despite loading perfectly in the browser

Six sites loaded without any issues in a regular browser. Google’s Rich Results Test returned a 200 OK status for them. But the Schema.org validator couldn’t find the URLs.

Here’s why that happens.

Most AI site-building platforms, including Replit, Bolt, Lovable, B12, and Base44, commonly generate sites built on modern JavaScript frameworks such as Next.js, Astro, and Nuxt.

On many of these platforms, schema markup is not included in the raw HTML delivered by the server. Instead, it’s injected into the page by JavaScript after the page loads in the browser.

The Schema.org validator is a lightweight crawler. It fetches raw HTML and reads what’s there. It does not execute JavaScript.

So if the schema only appears after JS runs, the validator either finds nothing or throws a rendering error.

Google’s Rich Results Test behaves differently. It uses a headless browser that renders JavaScript before reading the page, which is why it returns 200 OK even when the Schema.org validator fails.

But here’s where the real SEO risk lies: Googlebot also renders JavaScript, but not instantly or equally across every page.

When Googlebot first crawls a URL, it processes the raw HTML. The JavaScript-rendered version gets queued for a second crawl, which may happen hours, days, or weeks later.

For lower-priority pages, that second pass may not happen consistently at all.

This means your schema markup could be invisible to Google on first contact, and you’d never know, because the Rich Results Test told you everything was fine.
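One way to check this yourself is to look only at the HTML the server sends, without running any JavaScript. The sketch below is my own simplified stand-in for that kind of check, not a reproduction of how the Schema.org validator or Googlebot works internally. It parses a raw HTML string and lists the `@type` values found in JSON-LD script tags. It assumes you have already fetched the page source (for example with "view source" or `curl`), and it ignores nested `@graph` structures to stay short.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def schema_types_in_raw_html(html):
    """Return the @type values present in the HTML before any JS runs."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is skipped in this sketch
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict) and "@type" in item:
                types.append(item["@type"])
    return types
```

If this returns an empty list for a page where the Rich Results Test shows schema, the markup is most likely being injected by JavaScript after load.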

What a proper schema looks like on a content site

For context, here’s what a well-configured content site should have:

None of the vibe-coded sites I tested came close to this standard. And unless the person building the site already knows this list exists and knows how to implement each type correctly, their AI builder will not prompt them to do so.

That is the knowledge gap.

The Organic Traffic Reality

Schema markup is about giving Google the right signals. Organic traffic is about whether Google is responding.

And when I pulled the Semrush Domain Overview data for all 20 sites, the traffic picture was just as revealing as the schema findings.

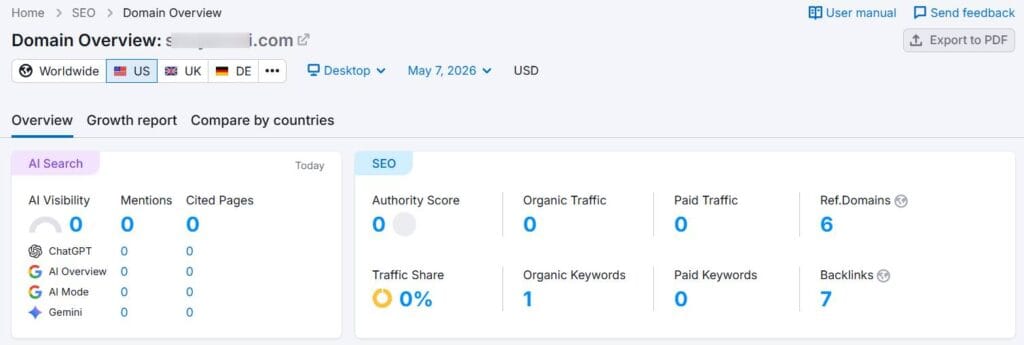

Finding 1: 17 out of 20 sites had between 0 and 10 organic visitors per month

Seventeen of the twenty vibe-coded sites I audited were essentially invisible on Google at the time of testing.

Semrush reported organic traffic numbers ranging from zero to single digits, meaning Google is either not indexing these sites meaningfully, not ranking their pages for any significant keywords, or both.

For context, these aren’t brand new domains registered last week.

These are sites owned by active marketers and creators who are building in public, sharing their work online, and in some cases positioning themselves as voices in the AI and digital marketing space.

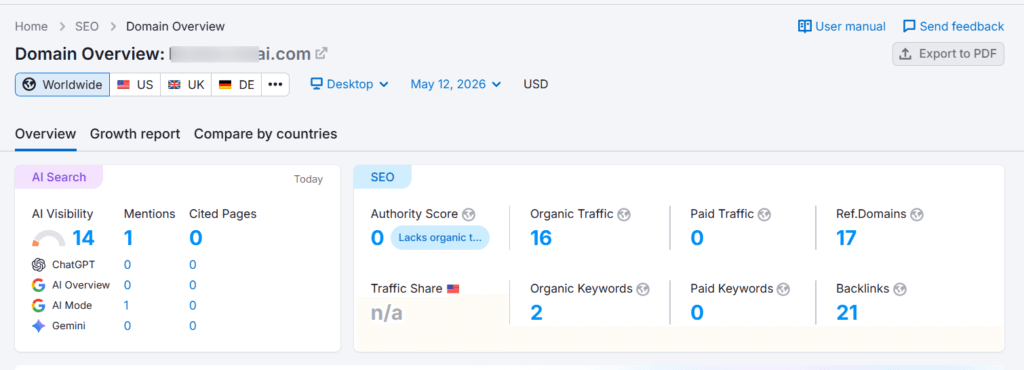

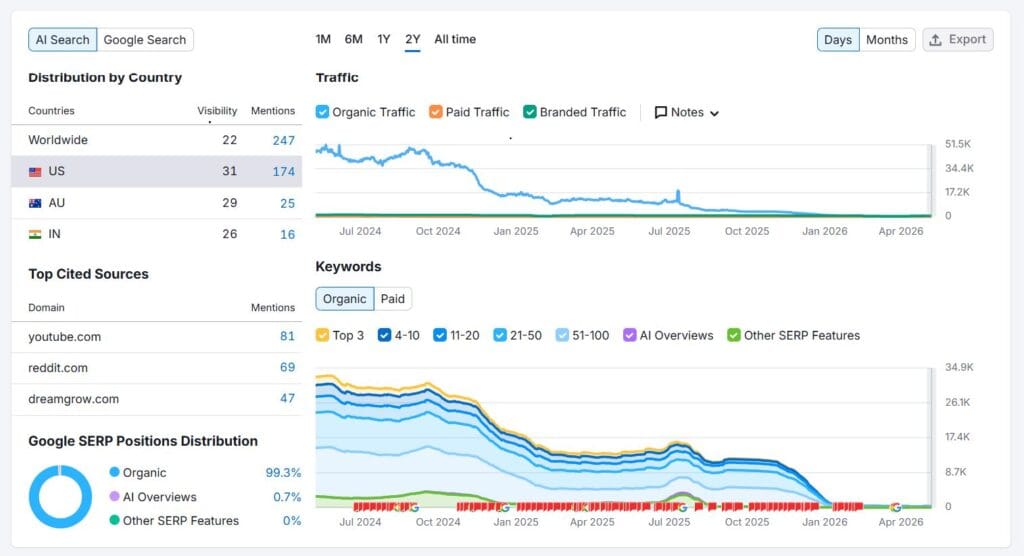

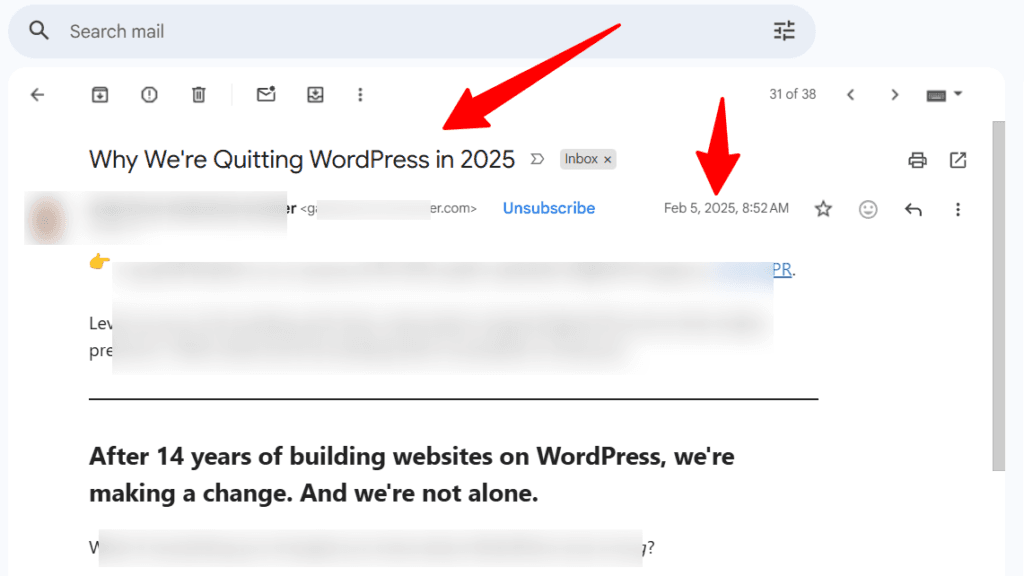

Finding 2: The authority site: an 82% traffic collapse in six months

The authority site I mentioned earlier, owned by a well-known marketer, experienced a significant decline in traffic.

Here is what Semrush showed:

That is an 82% collapse in organic traffic over roughly six months. To put that in business terms, if even 1% of that lost traffic was converting to clicks or sales, that’s a significant monthly revenue impact.

IMPORTANT NOTE:

Whether that traffic decline is specifically related to the AI-built site they migrated to is uncertain. But in February 2025, they sent the following email to their subscribers, and the major decline in traffic started between July and October 2025. The timing of that email and the pattern of the traffic decline are hard to ignore.

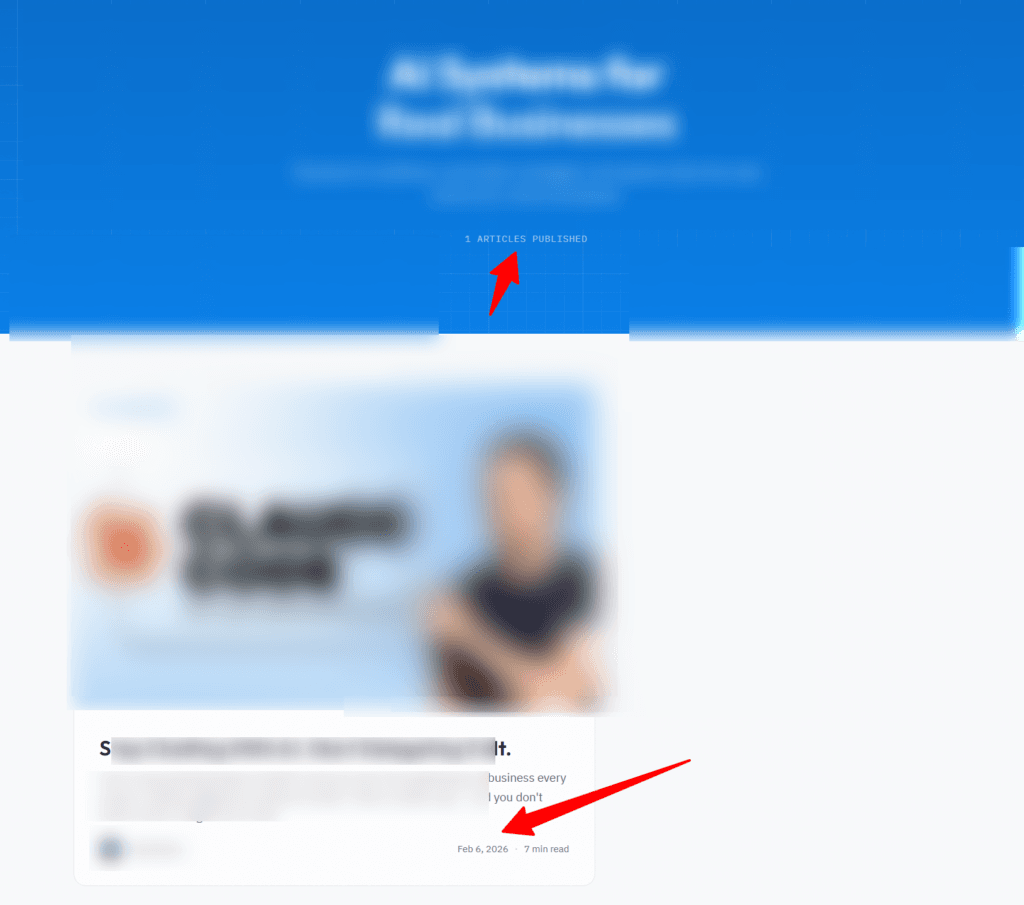

Finding 3: The blog architecture problem

Digging deeper into the authority site revealed something that helps explain a large part of the traffic collapse. The site’s actual blog page had only one published post, dated February 2026.

This doesn’t mean the site owner stopped publishing content entirely. They are still publishing content to static inner pages across the site.

But the blog post publishing system appears to be either broken, abandoned, or too technically difficult to use consistently in the backend.

Why does that distinction matter so much for SEO?

A properly functioning blog post system does far more than just display content. Every time a new post is published through a CMS:

When content goes to static inner pages instead of through the blog post publishing system, most of those signals are absent or weakened.

Google sees a site that isn’t being updated through its primary content channel, and it adjusts its crawl behavior and ranking confidence accordingly.

Why Vibe-Coded Sites Struggle With SEO: The Real Reasons

The audit data paints a clear picture. But data without explanation only tells half the story.

To understand why vibe-coded sites perform the way they do in search, you need to understand what’s happening under the hood.

NOTE:

This is not about capability. Most modern AI site builders are technically capable of producing SEO-friendly output.

The problem is a combination of platform immaturity, missing guardrails, and, most critically, a knowledge gap that the tools themselves do not close.

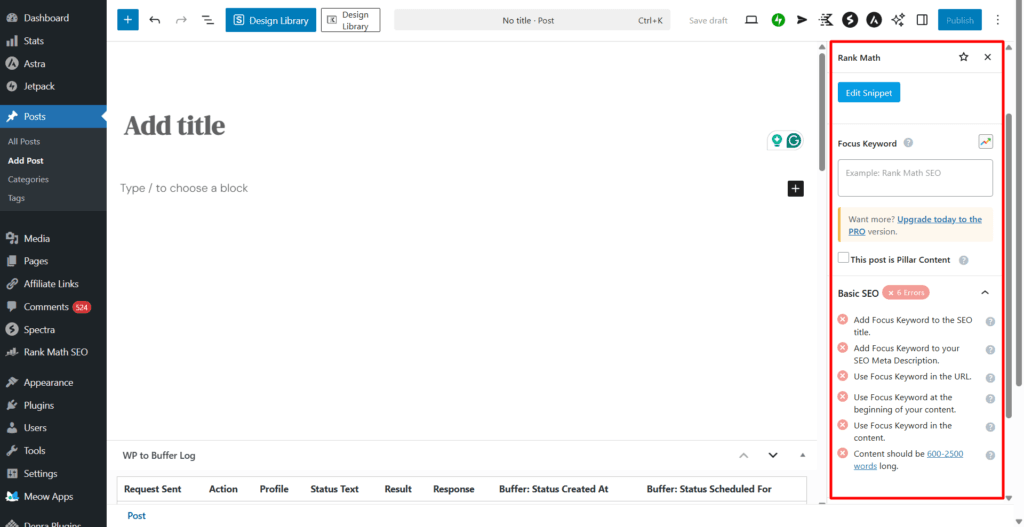

Reason 1: Schema markup is builder-dependent, not automatic

Whether a vibe-coded site has schema markup depends almost entirely on whether the person building it knows to ask for it, which schema types their pages need, and how to verify that the implementation is correct.

WordPress with Rank Math or Yoast handles this automatically.

On most vibe-coded platforms, you get what you build, and if you don’t know what to build, you get nothing.

Reason 2: JavaScript rendering creates crawl inconsistency

Most vibe-coded websites are built with modern JavaScript frameworks like Next.js, Nuxt, or Astro.

While these frameworks are technically advanced, they introduce a layer of complexity that often creates a disconnect between what you see in your browser and what a search engine bot sees during its initial visit.

The core of the problem lies in client-side rendering, a process often loosely described as hydration. When a bot or user visits a JS-heavy site that renders in the browser, the server often sends a relatively empty HTML shell.

The browser then downloads the JavaScript files and “hydrates” that shell, turning it into a fully functional, interactive page.

For SEO, this creates three distinct hurdles:

In a mature environment like WordPress, the HTML is Server-Side Rendered (SSR) by default. Every word, link, and meta tag is present in the raw source code the moment the server responds.

With vibe-coding, unless you are an expert developer who knows how to configure “Static Site Generation” (SSG) or “Edge Rendering,” you are essentially publishing content that Google may not fully see, index, or trust on first contact.
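A quick way to see whether a page falls into the "empty shell" category is to count how many words are present in the server's HTML before any script runs. The following is a rough sketch under my own assumptions: the function names are made up, and the 20-word threshold is an arbitrary cutoff I picked for illustration, not a figure from Google or from this audit.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Gather text nodes, ignoring script, style, and noscript contents."""

    SKIPPED = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def raw_html_word_count(html):
    """Count words a non-rendering crawler could read from the raw HTML."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


def looks_like_empty_shell(html, min_words=20):
    """Flag pages whose raw HTML carries almost no readable text.

    min_words is an assumed threshold for illustration only.
    """
    return raw_html_word_count(html) < min_words
```

Feed it the output of "view source", not the browser's inspector, because the inspector shows the page after JavaScript has already run.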

Reason 3: Blog and CMS architecture are often absent or broken

A vibe-coded site is, at its core, a collection of static or semi-static pages.

Building a functional blogging system, one with a proper post pipeline, RSS feed, category taxonomy, pagination, and archive pages, requires deliberate technical setup that most AI builders don’t provide out of the box yet.

Reason 4: Static pages don’t earn the same crawl frequency as active blogs

Google allocates crawl budgets based on signals of freshness and activity.

A WordPress blog that publishes regularly, updates its RSS feed, and builds internal links tells Google to come back often.

A static site that rarely changes gives Google no reason to prioritize it in the crawl queue.

Reason 5: There are no SEO guardrails

When you write a post in WordPress with Rank Math active, for example, the plugin actively guides you. It tells you if your focus keyword isn’t in the title, warns you if your meta description is too long, and flags thin content and missing alt text.

Vibe-coded platforms have none of that. There is no plugin watching over your shoulder, no pre-publish checklist, no score. You publish, and you find out weeks later from your SEO tool that something went wrong, if you’re even checking.
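If your platform has no guardrails, you can approximate a few of them with a small pre-publish script. This is a minimal sketch of the checks described above, not how Rank Math or any other plugin actually implements them. The function name and both thresholds (160 characters, 300 words) are assumptions based on common rules of thumb, not official limits.

```python
MAX_META_DESCRIPTION_CHARS = 160  # assumed guideline, not an official limit
MIN_BODY_WORDS = 300  # assumed cutoff for "thin content"


def prepublish_warnings(title, meta_description, body_text, image_alts, focus_keyword):
    """Return a list of human-readable warnings for one draft post.

    image_alts is a list with one alt-text string (or None) per image.
    """
    warnings = []
    if focus_keyword.lower() not in title.lower():
        warnings.append("focus keyword missing from title")
    if len(meta_description) > MAX_META_DESCRIPTION_CHARS:
        warnings.append("meta description too long")
    if len(body_text.split()) < MIN_BODY_WORDS:
        warnings.append("thin content")
    if any(not (alt or "").strip() for alt in image_alts):
        warnings.append("image missing alt text")
    return warnings


if __name__ == "__main__":
    # Placeholder draft, for illustration only.
    for warning in prepublish_warnings(
        title="My Holiday Photos",
        meta_description="A short summary.",
        body_text="Only a few words here.",
        image_alts=["", "A red bike"],
        focus_keyword="seo audit",
    ):
        print("WARNING:", warning)
```

An empty list means the draft passed these four checks. It does not mean the post is well optimized, only that the most basic mistakes are absent.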

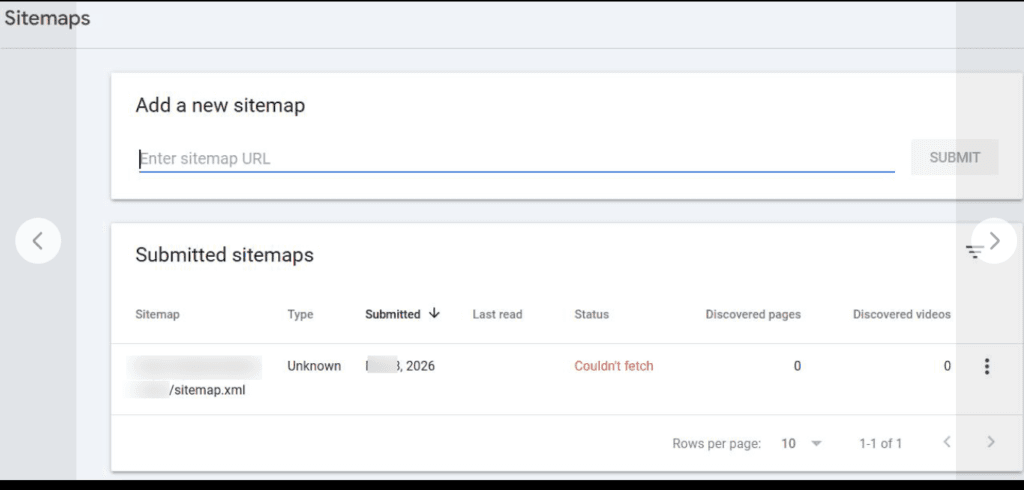

The Sitemap Problem: When Google Can’t Find Your Content

One finding from the audit deserves its own section, because it turned out to be far more widespread than I initially expected.

An XML sitemap is one of the most fundamental SEO infrastructure elements for a website. It’s a file that lists the URLs you want search engines to find and, optionally, when each one was last modified. (The sitemap protocol also defines change-frequency and priority hints, but Google has said it ignores those two fields.)

Without it, Google has to discover your content entirely on its own through crawling and internal links, which is a slower, less reliable process, especially for newer or recently migrated sites.

Across close to 20 AI-built sites I tested, not a single one had a clean, working XML sitemap. Many of the sitemap URLs returned a 404 “Not Found” error page.

This isn’t an edge case; it’s a pattern.

Three sitemap failure modes found

The audit revealed three distinct ways vibe-coded sites are failing at the sitemap level:

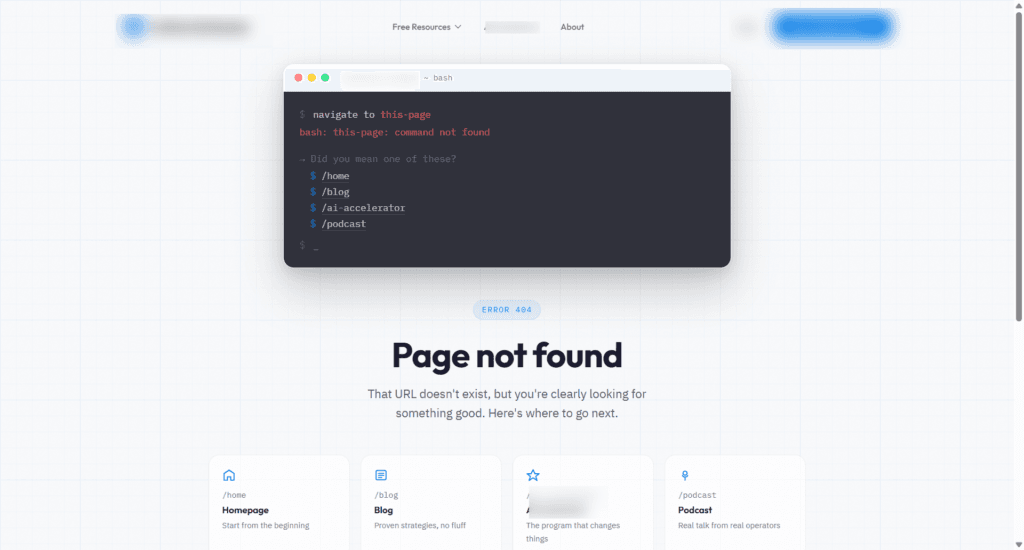

- Missing sitemap (404): The most common failure. The sitemap URL simply doesn’t exist. Navigating to yourdomain.com/sitemap.xml returns a 404 error page, sometimes a beautifully designed one, which only underscores the irony. The site owner has invested in aesthetics, while a basic crawlability requirement is missing entirely.

- Broken sitemap (Google couldn’t fetch): One site had actually submitted a sitemap to Google Search Console, but Google reported “Couldn’t fetch” with 0 discovered pages and listed the sitemap type as “Unknown.” The sitemap exists in name only. Google tried, failed, and gave up. The site owner may never have noticed, because the submission itself looked like progress.

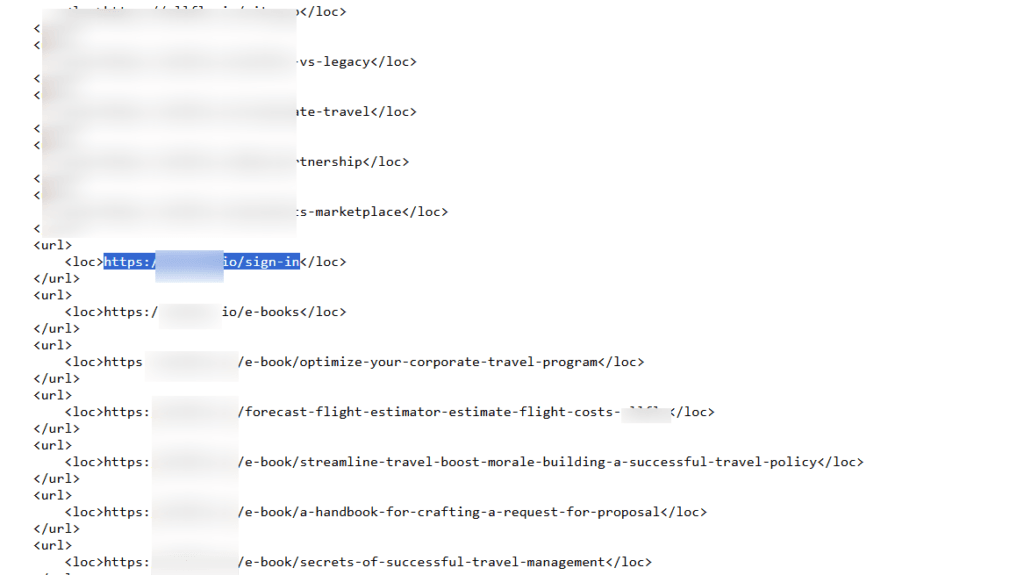

- Polluted sitemap (wrong pages included): Another site had a working sitemap, but it included the /sign-in page among its submitted URLs. A login page has no business being indexed by Google. Submitting it wastes crawl budget, creates potential duplicate content issues, and signals that nobody with SEO knowledge reviewed what the sitemap was actually submitting.
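The three failure modes above can be told apart with a few lines of code once you have the HTTP status and response body for the sitemap URL. The sketch below is my own simplification: the function name, the labels it returns, and the list of "private" path hints are assumptions for illustration, and fetching the URL is left to whatever HTTP client you prefer.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
# Assumed examples of paths that should not be in a sitemap.
PRIVATE_PATH_HINTS = ("/sign-in", "/login", "/admin")


def classify_sitemap(status_code, body):
    """Classify a sitemap response as missing, broken, polluted, or ok.

    body may be bytes or str. Returns (label, urls), where urls holds the
    offending URLs for "polluted" and every listed URL for "ok".
    """
    if status_code == 404:
        return "missing", []
    if status_code != 200:
        return "broken", []
    try:
        root = ET.fromstring(body)
    except ET.ParseError:
        return "broken", []  # e.g. an HTML page served at the sitemap URL
    if root.tag not in (SITEMAP_NS + "urlset", SITEMAP_NS + "sitemapindex"):
        return "broken", []
    urls = [loc.text.strip() for loc in root.iter(SITEMAP_NS + "loc") if loc.text]
    if not urls:
        return "broken", []
    polluted = [u for u in urls if any(hint in u for hint in PRIVATE_PATH_HINTS)]
    if polluted:
        return "polluted", polluted
    return "ok", urls
```

A "broken" result here roughly corresponds to what Search Console reports as "Couldn't fetch", although Google's own checks are more involved than this.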

Why this compounds every other SEO problem

The sitemap failure doesn’t sit in isolation; it amplifies every other issue in this audit. A site with no schema, no active blog, and no working sitemap is giving Google almost nothing to work with.

No structured data signals. No content freshness signals. No structured URL inventory. Google is being asked to rank a site it can barely find.

On WordPress, sitemap generation is handled for you: Rank Math, Yoast, and most SEO plugins automatically generate, update, and submit your sitemap.

On vibe-coded platforms, it’s yet another SEO requirement that only gets done if the user knows it’s needed.
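For anyone who has to fill that gap by hand, generating a valid sitemap is not much code. Here is a minimal sketch using only the Python standard library. The function name and the example URLs are placeholders, and how you publish the resulting file at /sitemap.xml depends on your platform, which this sketch does not cover.

```python
import xml.etree.ElementTree as ET

SITEMAP_XMLNS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, lastmod) pairs.

    lastmod is a YYYY-MM-DD string, or None to omit the element.
    Returns UTF-8 encoded bytes including the XML declaration.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_XMLNS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        if lastmod:
            ET.SubElement(entry, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    # Placeholder pages, for illustration only. Leave out login and
    # account pages: only list URLs you want indexed.
    xml_bytes = build_sitemap([
        ("https://example.com/", "2025-01-15"),
        ("https://example.com/blog/example-post", None),
    ])
    print(xml_bytes.decode("utf-8"))
```

The important part is regenerating the file every time a page is added or removed. A sitemap built once and never updated goes stale quickly.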

Interesting Fact!

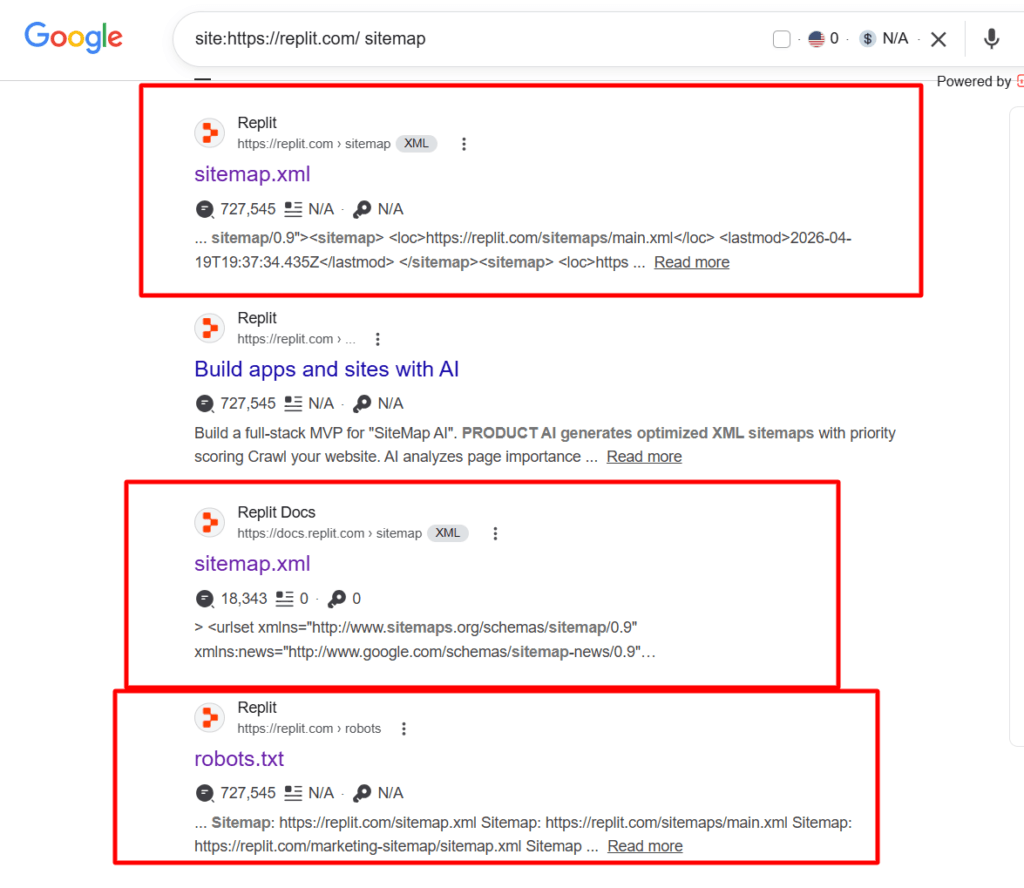

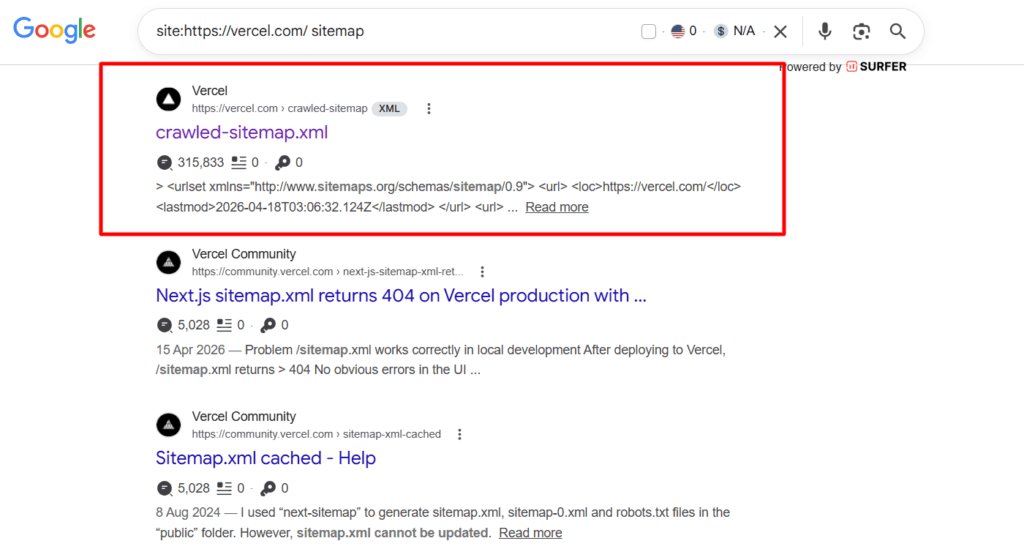

While I was writing this article, I stumbled upon an interesting Google search result that shows Replit and Vercel, two of the leading AI coding and site-building platforms, have their own sitemap.xml and robots.txt files indexed and appearing in Google search results.

The site: search operator only returns pages Google has already crawled and indexed, so this is not speculation or inference. Google has confirmed these technical files are actively sitting in its index and being served as search results.

That is a confirmed crawl configuration oversight, not a possibility.

Even the companies building AI site tools are not immune to the same technical SEO gaps their users struggle with. SEO infrastructure requires deliberate, knowledgeable attention regardless of how sophisticated the underlying technology is.

Why You Should Stay on WordPress (For Now)

Let me be clear about what this section is not. It’s not a declaration that WordPress is perfect, that it will always be the best option, or that vibe-coding platforms have no future in serious web publishing.

None of those things is true.

What this section is about is platform maturity, and making honest, data-informed decisions about which tool is right for the job you’re trying to do today.

What WordPress gives you that vibe-coded platforms don’t yet

Who should stay on WordPress right now?

If any of the following describes you, this is not the time to migrate a business site to a vibe-coded platform:

When Vibe-Coding Makes Sense

Despite everything the audit revealed, it would be intellectually dishonest to dismiss vibe-coding entirely.

For the right use cases, it is exactly the right tool for the job.

Conclusion

Vibe-coding is not the enemy of good SEO. Ignorance is.

The audit data in this article isn’t an argument against AI site builders as a technology. It’s an argument against using any tool without understanding what it does well, what it doesn’t do at all, and what that gap will cost you.

The pattern across the sites audited is clear.

Beautiful sites with zero organic traffic. An established site that lost 82% of its search traffic in six months. Schema markup either completely absent, riddled with warnings, or too minimal to communicate anything useful to Google.

Blog architectures that aren’t functioning the way they should. Across nearly 20 tested sites, not a single working XML sitemap.

None of that happened because the platforms are hopeless. It happened because their job is to build and deploy your site, and most of them do that remarkably well. Fast, beautiful, and functional in hours.

The problem is that most users of AI site builders didn’t know enough to manually configure what the platform doesn’t handle on its own.

That knowledge gap is the real story.

WordPress isn’t perfect. But for bloggers, affiliate marketers, and content publishers who depend on Google traffic, it remains the most battle-tested, SEO-ready platform available today.

Vibe-coding platforms are improving, but we’re not there yet.

Watch the space. Keep learning. But make your platform decisions based on what the data shows today, not what the hype promises tomorrow.

Google does not rank beautiful websites. It ranks websites that it can crawl, understand, and trust.